Quiet Light for Future Data Centers

The deluge of data we transmit every day across the globe via internet-enabled devices and services has forced us to look for more efficient power, bandwidth and physical space solutions for the technologies that underlie our modern online lives and businesses.

“Much of the world today is interconnected to data centers for everything from business to financial to social interactions,” said Daniel Blumenthal, a professor of electrical and computer engineering at UC Santa Barbara. The amount of data now being processed is growing so fast that the power needed just to get it from one place to another along the so-called information superhighway constitutes a significant percentage of the world’s total energy consumption, he said. This is particularly true of fiber optic interconnects — the part of the internet infrastructure tasked with getting massive amounts of data from one location to another and between machines inside the data center.

“Think of interconnects as the highways and the roads that move data,” Blumenthal said. There are several levels of interconnects, from the local types that move data from one computer server to another, to versions that are responsible for linkages between data centers. The energy required to power these interconnects alone is almost 10% of the world’s total energy consumption and climbing, thanks to the growing amount of data that these components need to turn from electronic signals to light, and back to electronic signals. The energy needed to switch the electronic data and to keep the data servers cool also adds a great deal to total power consumption.

“The amount of worldwide data traffic is driving up the capacity inside data centers to unprecedented levels and today’s engineering solutions break down,” Blumenthal explained. “Using conventional methods as this capacity explodes places a tax on the energy and cost requirements of optical communications between physical equipment, so we need drastically new approaches.”

As the demand for additional infrastructure to maintain the performance of the superhighways increases, the physical space needed for all these components and data centers is becoming a limiting factor. The result is bottlenecks in information flow, even as data processing chipsets increase their capacity to what could be a whopping 100 terabytes per second for a single chip in the not-too-distant future. This level of scaling was unheard of just a handful of years ago, and now it appears that is where the world is headed.

“The challenge we have is to ramp up for when that happens,” said Blumenthal, who also serves as director for UC Santa Barbara’s Terabit Optical Ethernet Center, and represents UC Santa Barbara in Microsoft’s Optics for the Cloud Research Alliance.

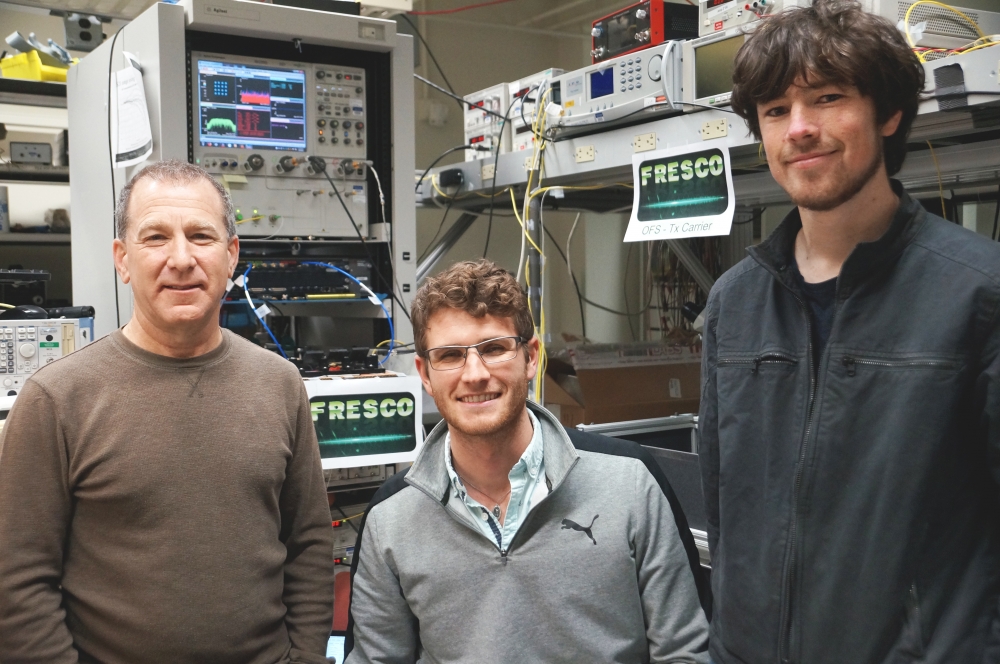

This challenge is now a job for Blumenthal’s ARPA-e project called FRESCO: FREquency Stabilized COherent Optical Low-Energy Wavelength Division Multiplexing DC Interconnects. Bringing the speed, high data capacity and low-energy use of light (optics) to advanced internet infrastructure architecture, the FRESCO team aims to solve the data center bottleneck while bringing energy usage and space needs to a sustainable and engineerable level.

The effort is funded by ARPA-e under the OPEN 2018 program and represents an important industry-university partnership with emphasis on technology transition. The FRESCO project involves important industry partners like Microsoft and Barefoot Networks (now Intel), who are looking to transition new technologies to solve the problems of exploding chip and data center capacities.

The key ingredients to the FRESCO approach, according to Blumenthal, are to shorten the distance between optics and electronics through co-integration, while also drastically increasing the efficiency of transmitting extremely high data rates over few fibers using the extreme stability of “quiet light” used at the transmitting and receiving end of the interconnect.

From Big Physics to Small Chips

FRESCO can accomplish this goal by bringing the performance of coherent optical wavelength division multiplexed (WDM) technology, currently relegated to long-haul fiber optic transmission, into the data center and co-locating both optical and electronic components on the same switch chip. Removing many of the power-consuming components used in long-haul coherent communications while maintaining the efficiency and capacity is key to moving this much-needed bandwidth inside the data center, where tens of thousands of servers need to be interconnected.

“The way FRESCO is able to do this is by bringing to bear techniques developed for large-scale physics experiments to the chip scale,” Blumenthal said. It’s a departure from the more conventional laser that simply turns on and off and sits in a removable module in the faceplate of the fiber optic switch. Incorporating these large-scale physics techniques on chip reduces the power-consuming portions of coherent WDM, particularly the digital signal processor (DSP). Additionally, co-locating the optics and electronics in a tightly integrated package eliminates the energy otherwise needed to move data over large distances on a circuit card and between the electronics and pluggable optical faceplate modules.

Optical signals can be stacked using a technique known as coherent wavelength-division multiplexing, which allows data to be sent on different frequencies, or colors, over a single optical fiber, using very high-efficiency coherent modulation akin to that used in cell phone transmission technology. However, because of space constraints, Blumenthal said, the traditional measures used to process coherent long-haul optical signals, including electronic DSP chips and very high-bandwidth circuits, have to be removed from the interconnect links in order to use them inside the data center.
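To make the idea of stacking colors on one fiber concrete, here is a minimal Python sketch. It lays out a handful of evenly spaced optical carriers around the 200 THz carrier frequency Blumenthal mentions in this article, converts each to a wavelength, and adds up the data rate. The channel spacing, channel count and per-channel rate are hypothetical values chosen for illustration only; they are not FRESCO parameters.

```python
# Illustrative only: spacing, channel count and per-channel rate are
# hypothetical values, not figures from the FRESCO project.
C_M_PER_S = 299_792_458  # speed of light in vacuum, m/s


def wdm_channels(center_thz, spacing_ghz, count):
    """Return carrier frequencies (THz) evenly spaced around a center."""
    offset = (count - 1) / 2
    return [center_thz + (i - offset) * spacing_ghz / 1000 for i in range(count)]


def wavelength_nm(freq_thz):
    """Convert an optical frequency in THz to a vacuum wavelength in nm."""
    return C_M_PER_S / (freq_thz * 1e12) * 1e9


def aggregate_gbps(count, per_channel_gbps):
    """Total rate when every channel carries the same data rate."""
    return count * per_channel_gbps


if __name__ == "__main__":
    channels = wdm_channels(center_thz=200.0, spacing_ghz=100.0, count=4)
    for f in channels:
        print(f"{f:.2f} THz -> {wavelength_nm(f):.2f} nm")
    print(aggregate_gbps(len(channels), 400), "Gb/s over one fiber (assumed rate)")
```

Each carrier is a separate "color" sharing the same fiber, so capacity grows with the number of channels without adding fibers.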

FRESCO does away with these components with an elegant and powerful technique that “anchors” the light at both transmitting and receiving ends, creating spectrally pure, stable light that Blumenthal has dubbed “quiet light.”

“In order to do that we actually bring in light stabilization techniques and technologies that have been developed over the years for atomic clocks, precision metrology and gravitational wave detection, and use this stable, quiet light to solve the data center problem,” Blumenthal said. “Bringing key technologies from the big physics lab to the chip scale is the challenging and fun part of this work.”

Specifically, he and his team have been using a phenomenon called stimulated Brillouin scattering, which is characterized by the interaction of light (photons) with sound produced inside the material through which it is traveling. These sound waves (phonons) are the result of the collective light-stimulated vibration of the material’s atoms, and they act to buffer and quiet otherwise “noisy” light frequencies, creating a spectrally pure source at the transmitting and receiving ends. The second part of the solution is to anchor or stabilize these pure light sources using optical cavities that store energy with such high quality, almost like that of an atom, that the lasers can be aligned using the kind of low-energy, low-bandwidth electronic circuits used in the radio world, while operating at optical data rates.

Aligning a transmitted signal to a local laser in coherent fiber communications requires that the light frequency and phase of the two laser signals be kept aligned at the receiver so that data can be recovered. This normally requires high-power analog electronics or DSPs, which are not viable solutions for bringing this capacity inside the data center (there are hundreds of thousands of fiber connections in the data center, as compared to tens of connections in the long-haul). Also, the more energy and space these technologies take up inside the data center, the more power is consumed, in equivalent measure, to cool it.

“There is very little energy needed to just keep the lasers at the transmitter and receiver aligned to and finding each other,” Blumenthal said of FRESCO, “similar to that of electronic circuits used for radio. That is the exciting part: we are enabling a transmission carrier at 200 THz to carry data using low-energy simple electronic circuits, as opposed to the use of DSPs and high bandwidth circuitry, which in essence throws a lot of processing power at the optical signal to hunt down and match the frequency and phase of the optical signal so that data can be recovered.” With the FRESCO method, the lasers at the transmitting and receiving ends are “anchored within each other’s sights in the first place, and drift very slowly on the order of minutes, requiring very little effort or energy to track one with the other,” according to Blumenthal.
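A rough way to see why slow drift permits simple, low-bandwidth electronics is a toy tracking loop. The Python sketch below simulates a generic first-order correction loop that nudges a receiver laser toward a slowly drifting transmitter. It is not the FRESCO circuit, and the drift rate, update rate and loop gain are hypothetical numbers chosen only to show the scaling.

```python
# Toy model only: a generic first-order tracking loop, not the FRESCO design.
# The drift rate, update rate and gain are hypothetical illustration values.

def track_offset(drift_hz_per_s, update_rate_hz, gain, duration_s):
    """Simulate a loop nulling a slowly drifting frequency offset.

    Returns the largest residual offset (Hz) seen in the second half of the
    run, after the loop has settled.
    """
    dt = 1.0 / update_rate_hz
    steps = int(duration_s * update_rate_hz)
    offset = 0.0      # transmitter-minus-receiver frequency offset, Hz
    correction = 0.0  # tuning applied so far to the receiver laser, Hz
    worst = 0.0
    for n in range(steps):
        offset += drift_hz_per_s * dt    # lasers drift apart a little
        residual = offset - correction   # error the loop measures
        correction += gain * residual    # low-rate correction step
        if n >= steps // 2:
            worst = max(worst, abs(residual))
    return worst


if __name__ == "__main__":
    # Same loop and update rate, two assumed drift rates.
    for drift in (1000.0, 10.0):
        err = track_offset(drift, update_rate_hz=10.0, gain=0.5, duration_s=60.0)
        print(f"drift {drift:7.1f} Hz/s -> settled residual about {err:.1f} Hz")
```

In this model the settled error equals the drift per update divided by the gain, so the slower the lasers drift, the lower the update rate needed to hold the same error. That is the intuition behind tracking with radio-style circuits rather than a DSP.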

On the Horizon, and Beyond

While still in early stages, the FRESCO team’s technology is very promising. Having developed discrete components, the team, which also includes Yale University, Northern Arizona University, NIST, the University of Colorado Boulder and Morton Photonics, is poised to demonstrate the concept by linking those components, measuring energy use, and then transmitting the highest data capacity over a single frequency with the lowest energy to date on a frequency-stabilized link. Future steps include demonstrating multiple quiet light frequencies using a technology called optical frequency combs, which are also integral to atomic clocks, astrophysics and other precision sciences. The team is in the process of integrating these components onto a single chip, ultimately aiming to develop manufacturing processes that will allow for the transition to FRESCO technology.

This technology is likely only the tip of the iceberg when it comes to possible innovations in the realm of optical telecommunications.

“We see our chipset replacing over a data center link what today would take between four and 10 racks of equipment,” Blumenthal said. “The fundamental knowledge gained by developing this technology could easily enable applications we have yet to invent, for example in quantum communications and computing, precision metrology and precision timing and navigation.”

“If you look at trends, over time you can see something that in the past took up a room full of equipment become something that was personally accessible through a technology innovation — for example supercomputers that became laptops through nanometer transistors,” he said of the disruption that became the wave in personal computing and everything that it enabled. “We know now how we want to apply the FRESCO technology to the data center scaling problem, but we think there also are going to be other unforeseen applications too. This is one of the primary reasons for research exploration and investment without knowing all the answers or applications beforehand.”