An Important Step in Artificial Intelligence

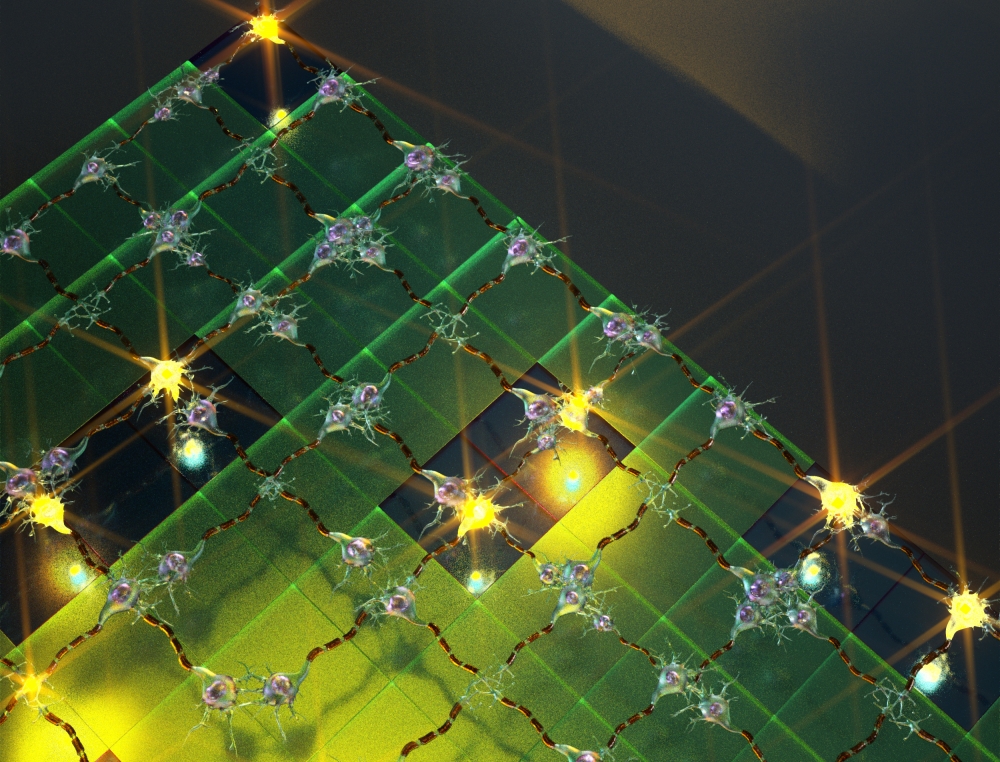

In what marks a significant step forward for artificial intelligence, researchers at UC Santa Barbara have demonstrated the functionality of a simple artificial neural circuit. For the first time, a circuit of about 100 artificial synapses was shown to perform a simple version of a typical human task: image classification.

“It’s a small, but important step,” said Dmitri Strukov, a professor of electrical and computer engineering. With time and further progress, the circuitry may eventually be expanded and scaled to approach something like the human brain’s, which has 10¹⁵ (one quadrillion) synaptic connections.

For all its errors and potential for faultiness, the human brain remains a model of computational power and efficiency for engineers like Strukov and his colleagues, Mirko Prezioso, Farnood Merrikh-Bayat, Brian Hoskins and Gina Adam. That’s because the brain can accomplish in a fraction of a second certain functions that computers would require far more time and energy to perform.

What are these functions? Well, you’re performing some of them right now. As you read this, your brain is making countless split-second decisions about the letters and symbols you see, classifying their shapes and relative positions to each other and deriving different levels of meaning through many channels of context, in as little time as it takes you to scan over this print. Change the font, or even the orientation of the letters, and it’s likely you would still be able to read this and derive the same meaning.

In the researchers’ demonstration, the circuit implementing the rudimentary artificial neural network was able to successfully classify three letters (“z”, “v” and “n”) by their images, each letter stylized in different ways or saturated with “noise”. In a process similar to how we humans pick our friends out from a crowd, or find the right key from a ring of similar keys, the simple neural circuitry was able to correctly classify the simple images.
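
To make the idea of classifying noisy letter images concrete, here is a minimal software sketch of a single-layer perceptron. It is not the researchers' circuit or training procedure: the 3x3 pixel patterns, the single-pixel-flip noise model, and every name in the code are assumptions chosen for illustration.

```python
# Hypothetical 3x3 pixel patterns (1 = dark pixel); not the images used in the study.
LETTERS = {
    "z": [1, 1, 1,  0, 1, 0,  1, 1, 1],
    "v": [1, 0, 1,  1, 0, 1,  0, 1, 0],
    "n": [1, 1, 1,  1, 0, 1,  1, 0, 1],
}
CLASSES = list(LETTERS)


def encode(pixels):
    """Map 0/1 pixels to -1/+1 and append a constant bias input."""
    return [2 * p - 1 for p in pixels] + [1]


def noisy_variants(pixels):
    """Yield the clean pattern, then every copy with exactly one pixel flipped."""
    yield list(pixels)
    for i in range(len(pixels)):
        flipped = list(pixels)
        flipped[i] = 1 - flipped[i]
        yield flipped


def predict(weights, x):
    """Return the index of the output with the largest weighted sum."""
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    return scores.index(max(scores))


def train(samples, n_classes, max_epochs=100):
    """Multiclass perceptron rule: on a mistake, move the weights of the
    correct class toward the input and those of the wrong guess away from it."""
    weights = [[0.0] * len(samples[0][0]) for _ in range(n_classes)]
    for _ in range(max_epochs):
        mistakes = 0
        for x, target in samples:
            guess = predict(weights, x)
            if guess != target:
                mistakes += 1
                for i, xi in enumerate(x):
                    weights[target][i] += xi
                    weights[guess][i] -= xi
        if mistakes == 0:  # a full pass with no errors: training is done
            break
    return weights


# 3 letters x (1 clean + 9 single-flip images) = 30 training samples.
SAMPLES = [
    (encode(variant), CLASSES.index(name))
    for name, pixels in LETTERS.items()
    for variant in noisy_variants(pixels)
]
WEIGHTS = train(SAMPLES, len(CLASSES))


def classify(pixels):
    """Name the letter that the trained network assigns to a 3x3 image."""
    return CLASSES[predict(WEIGHTS, encode(pixels))]
```

Calling `classify(LETTERS["v"])` returns the label the trained weights assign to the clean "v" pattern. In a hardware version, each entry of `WEIGHTS` would correspond to an adjustable physical synapse rather than a number held in software.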

“While the circuit was very small compared to practical networks, it is big enough to prove the concept of practicality,” said Merrikh-Bayat. According to Gina Adam, as interest grows in the technology, so will research momentum.

“And, as more solutions to the technological challenges are proposed the technology will be able to make it to the market sooner,” she said.

Key to this technology is the memristor (a combination of “memory” and “resistor”), an electronic component whose resistance changes depending on the direction of the flow of the electrical charge. Unlike conventional transistors, which rely on the drift and diffusion of electrons and their holes through semiconducting material, memristor operation is based on ionic movement, similar to the way human neural cells generate neural electrical signals.

“The memory state is stored as a specific concentration profile of defects that can be moved back and forth within the memristor,” said Strukov. The ionic memory mechanism brings several advantages over purely electron-based memories, which makes it very attractive for artificial neural network implementation, he added.

“For example, many different configurations of ionic profiles result in a continuum of memory states and hence analog memory functionality,” he said. “Ions are also much heavier than electrons and do not tunnel easily, which permits aggressive scaling of memristors without sacrificing analog properties.”
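
One way to picture why analog memory states suit neural networks is to treat each device's conductance as a synaptic weight. The sketch below rests on assumptions the article does not spell out: that input voltages are applied across devices sharing an output wire, and that a signed weight is represented by the difference between two conductances. The function name and all numeric values are hypothetical.

```python
def column_current(voltages, g_plus, g_minus):
    """Net output current (amperes) for one signed-weight output.

    Ohm's law gives the current through each device (I = V * G), and
    currents that meet on a shared wire add up (Kirchhoff's current law).
    The effective weight of each input is assumed to be g_plus - g_minus.
    """
    return sum(v * (gp - gm) for v, gp, gm in zip(voltages, g_plus, g_minus))


# Illustrative values only, not measurements from the study.
VOLTAGES = [0.1, -0.1, 0.1]        # input voltages, in volts
G_PLUS = [40e-6, 10e-6, 25e-6]     # conductances, in siemens
G_MINUS = [10e-6, 30e-6, 25e-6]    # conductances, in siemens

NET_CURRENT = column_current(VOLTAGES, G_PLUS, G_MINUS)
```

With these numbers the net current works out to about 5 microamperes. Under the stated assumptions, the weighted sum at the heart of a neural network is produced by the physics of the devices themselves, and a continuum of conductance values allows finely graded weights.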

This is where analog memory trumps digital memory: In order to create the same human brain-type functionality with conventional technology, the resulting device would have to be enormous — loaded with multitudes of transistors that would require far more energy.

“Classical computers will always find an ineluctable limit to efficient brain-like computation in their very architecture,” said lead researcher Prezioso. “This memristor-based technology relies on a completely different way inspired by biological brain to carry on computation.”

To approach the functionality of the human brain, however, many more memristors would be required to build neural networks complex enough to do the kinds of things we do with barely any effort and energy, such as identifying different versions of the same thing or inferring the presence or identity of an object based not on the object itself but on other things in a scene.

Potential applications already exist for this emerging technology, such as medical imaging, improved navigation systems or even searches based on images rather than on text. The energy-efficient compact circuitry the researchers are striving to create would also go a long way toward creating the kind of high-performance computers and memory storage devices users will continue to seek long after the proliferation of digital transistors predicted by Moore’s Law becomes too unwieldy for conventional electronics.

“The exciting thing is that, unlike more exotic solutions, it is not difficult to imagine this technology integrated into common processing units and giving a serious boost to future computers,” said Prezioso.

In the meantime, the researchers will continue to improve the performance of the memristors, scaling the complexity of circuits and enriching the functionality of the artificial neural network. The very next step would be to integrate a memristor neural network with conventional semiconductor technology, which will enable more complex demonstrations and allow this early artificial brain to do more complicated and nuanced things. Ideally, according to materials scientist Hoskins, this brain would consist of trillions of these types of devices vertically integrated on top of each other.

“There are so many potential applications — it definitely gives us a whole new way of thinking,” he said.

Konstantin Likharev from the Department of Physics and Astronomy at Stony Brook University also conducted research for this project. The researchers’ findings are published in the journal Nature.